F1 Score vs. AUC

Comparison of Precision, Recall, F1 Score, and ROC AUC

Precision = TP / (TP + FP). Recall (sensitivity) is the ratio of correctly predicted positive observations to all observations that actually belong to the positive class.

The F1 score combines precision and recall, so it takes both false positives and false negatives into account. It is often described as a weighted average of the two; more precisely, it is the harmonic mean of precision and sensitivity.

The ROC curve was first developed by electrical engineers and radar engineers during World War II. It is also known as a relative operating characteristic curve because it is a comparison of two operating characteristics (true positive rate and false positive rate) as the threshold changes.

Precision-recall curves summarize the trade-off between the true positive rate (recall) and the positive predictive value (precision) across thresholds. The two can be condensed into a single number with the F-measure, or F1 score, which calculates the harmonic mean of precision and recall.
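The definitions above can be sketched in a few lines of plain Python. This is a minimal illustration, not a library implementation: the helper name `precision_recall_f1` and the counts passed to it are hypothetical, chosen only to show that the harmonic mean sits below the arithmetic mean when precision and recall differ.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, f1) from confusion-matrix counts."""
    # Guard against empty denominators (no predicted or no actual positives).
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical counts, for illustration only.
precision, recall, f1 = precision_recall_f1(tp=8, fp=2, fn=8)
arithmetic_mean = (precision + recall) / 2
print(precision, recall, f1, arithmetic_mean)
```

With these counts precision is 0.8 and recall is 0.5; the F1 score comes out lower than their simple average because the harmonic mean is pulled toward the smaller value.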

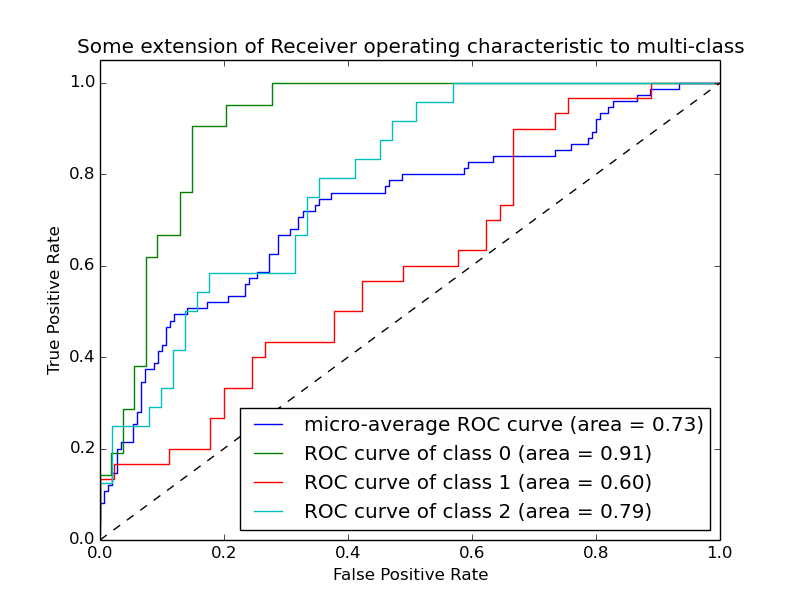

Precision and recall. Let me introduce two new metrics, and with them the F1 score. If you read much of the literature on precision and recall, you cannot avoid the other measure, F1, which is a function of precision and recall.

In scikit-learn, `sklearn.metrics.roc_auc_score(y_true, y_score, average='macro', sample_weight=None, max_fpr=None, multi_class='raise', labels=None)` computes the Area Under the Receiver Operating Characteristic Curve (ROC AUC) from prediction scores.

ROC curves and AUC values are not as straightforward as accuracy or the F1 score, and I always used to feel that I was missing their key purpose. In this article I would like to address that concern and share with my fellow readers what the ROC curve and AUC values are and how they should be used in evaluation.
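To make the meaning of ROC AUC concrete, here is a small sketch in plain Python that computes it directly as the fraction of (positive, negative) pairs in which the positive example receives the higher score, with ties counted as half. The function name `pairwise_auc` and the toy data are assumptions for illustration; for a binary problem, `roc_auc_score(y_true, y_score)` should agree with this value.

```python
def pairwise_auc(y_true, y_score):
    """ROC AUC as the probability that a random positive outranks a random negative."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0      # positive ranked above negative
            elif p == n:
                wins += 0.5      # ties count as half
    return wins / (len(pos) * len(neg))


# Toy labels and scores, for illustration only.
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]
auc = pairwise_auc(y_true, y_score)
print(auc)  # 3 of the 4 positive/negative pairs are ordered correctly -> 0.75
```

The pairwise loop is quadratic, so it only suits small examples; it is shown here because it makes clear that AUC depends on how the scores rank the examples, not on any particular decision threshold.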

One snippet computes scores with `average='weighted'`, printing the precision from `precision_score` on `y_test` and the predictions along with the F1 score. It triggers a warning at `/usr/local/lib/python2.7/site-packages/sklearn/metrics/classification.py:676`: "DeprecationWarning: The default weighted averaging is deprecated, and from version 0.18, use of precision, recall or ..."

I am training a logistic regression classifier to separate two classes using Python and scikit-learn. The data are extremely imbalanced (about 14300:1). I'm getting almost 100% accuracy and ROC AUC, but 0 in precision, recall, and F1 score.

F-score: the F-measure is computed from precision and recall and is sometimes used as a metric. It is nothing but the harmonic mean of precision and recall.

It is evident from the ROC curve diagram that the area between the ROC curve and the axis is 0.5. This is still not the worst possible model, but its predictions are no better than random guessing.
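The imbalanced-data situation described above can be reproduced with a small plain-Python sketch. The data here are hypothetical: one positive among 14300 negatives, scored so that the positive ranks highest but still falls below a 0.5 decision threshold. Under those assumptions accuracy and ROC AUC are near or at their maximum while precision, recall, and F1 are all zero.

```python
# Hypothetical data mirroring a 14300:1 class imbalance.
n_neg = 14300
y_true = [0] * n_neg + [1]
y_score = [0.01] * n_neg + [0.30]  # positive ranks highest, yet scores below 0.5

threshold = 0.5
y_pred = [1 if s >= threshold else 0 for s in y_score]  # every example predicted negative

tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)

accuracy = (tp + tn) / len(y_true)
precision = tp / (tp + fp) if (tp + fp) else 0.0  # no predicted positives -> 0
recall = tp / (tp + fn) if (tp + fn) else 0.0
f1 = 2 * precision * recall / (precision + recall) if (precision + recall) else 0.0

# ROC AUC uses only the ranking of the scores, not the threshold.
pos = [s for t, s in zip(y_true, y_score) if t == 1]
neg = [s for t, s in zip(y_true, y_score) if t == 0]
auc = sum(1.0 if p > n else (0.5 if p == n else 0.0) for p in pos for n in neg) / (len(pos) * len(neg))

print(accuracy, auc, precision, recall, f1)
```

Accuracy is dominated by the majority class and AUC ignores the threshold, so neither reveals that the classifier never predicts the positive class. Lowering the threshold (here, to anything at or below 0.30) would recover the positive without changing the AUC.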

A comparative analysis of the ML algorithms has been performed based on a few performance metrics: accuracy, recall, precision, F1 score, the AUC-ROC curve, and the AUC-PR curve. The Gradient Boosting classifier outperformed all the other algorithms, with an accuracy of 92.4% and a recall of 94.4%.

Precision-recall curves are often zigzag curves, frequently going up and down. Therefore precision-recall curves tend to cross each other. For diagnostic tests you would first of all like to know the sensitivity (recall), because you want to be sure that the test identifies the vast majority of actual positives.

Interpret the results of your classification using Receiver Operating Characteristic (ROC) and Precision-Recall (PR) curves in Python. There are obviously a few outliers, such as negative samples to which the model gave a high score and positive samples with a low score.
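The zigzag behaviour of precision-recall curves mentioned above can be seen by sweeping the decision threshold by hand. The following is a minimal sketch in plain Python; the function name `curve_points` and the toy data are assumptions, and the function expects both classes to be present. Each threshold yields one ROC point (false positive rate, true positive rate) and one PR point (recall, precision).

```python
def curve_points(y_true, y_score):
    """Return one dict per threshold with the ROC and PR coordinates at that threshold."""
    n_pos = sum(y_true)
    n_neg = len(y_true) - n_pos
    points = []
    # Use each distinct score as a threshold, from strictest to most lenient.
    for thr in sorted(set(y_score), reverse=True):
        tp = sum(1 for t, s in zip(y_true, y_score) if s >= thr and t == 1)
        fp = sum(1 for t, s in zip(y_true, y_score) if s >= thr and t == 0)
        points.append({
            "threshold": thr,
            "fpr": fp / n_neg,            # ROC x-axis
            "tpr": tp / n_pos,            # ROC y-axis, also recall
            "precision": tp / (tp + fp),  # at least one example is predicted positive here
        })
    return points


# Toy data with one high-scoring negative and one low-scoring positive.
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]
points = curve_points(y_true, y_score)
for pt in points:
    print(pt)

tprs = [pt["tpr"] for pt in points]
precisions = [pt["precision"] for pt in points]
```

As the threshold is lowered, the true positive rate never decreases, while precision drops when a negative is admitted and rises again when the next positive is admitted. That is the up-and-down pattern in the PR curve.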

Metrics covered: accuracy, precision, recall (true positive rate), F1 score, and ROC AUC, where AUC stands for Area Under the Curve.

Comparison of the ROC AUC scores of predictive models using different data pre-processing strategies.

One code fragment computes the ROC AUC with `roc_auc_score` from `y_true` and the logistic-regression score column of the data, then uses `dt.apply` with a lambda and `axis=1` to set a row's precision to 0 or keep it, depending on how its recall compares with 0.7.

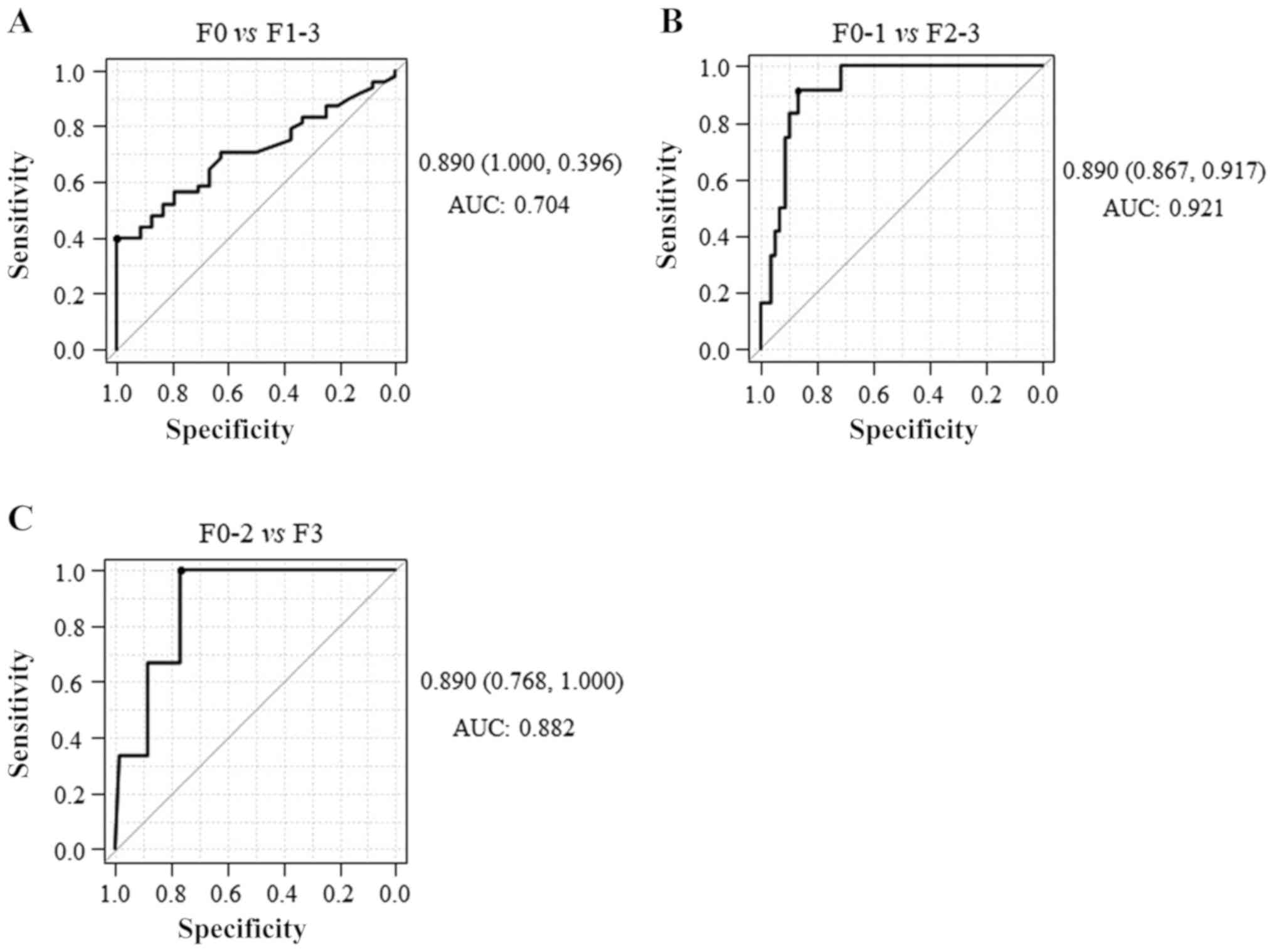

In the area of machine learning, the frequently used evaluation metrics include accuracy (ACC), recall, precision, F1 score (F1), and the Matthews correlation coefficient (MCC), each of which is calculated from the counts of true and false positives and negatives. The ROC and the precision-recall curves were displayed in Figure 6.
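The formulas themselves are not preserved in the text above. For reference, the standard textbook definitions of these five metrics in terms of the confusion-matrix counts (TP, TN, FP, FN) are given below; these are the usual definitions, not necessarily the exact notation of the original publication.

```latex
\begin{align}
\mathrm{ACC} &= \frac{TP + TN}{TP + TN + FP + FN} \\
\mathrm{Recall} &= \frac{TP}{TP + FN} \\
\mathrm{Precision} &= \frac{TP}{TP + FP} \\
F_1 &= \frac{2 \cdot \mathrm{Precision} \cdot \mathrm{Recall}}{\mathrm{Precision} + \mathrm{Recall}} \\
\mathrm{MCC} &= \frac{TP \cdot TN - FP \cdot FN}{\sqrt{(TP + FP)(TP + FN)(TN + FP)(TN + FN)}}
\end{align}
```

Unlike precision, recall, and F1, the MCC uses all four cells of the confusion matrix, including the true negatives.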
